Lecturer: Ioannis Pitas (pitas@csd.auth.gr), Aristotle University of Thessaloniki, Greece

Tutorial Summary

Drone vision plays a pivotal role in drone perception and control, because it: a) enhances flight safety through drone localization/mapping, obstacle detection and emergency landing detection; b) enables high-quality visual data acquisition, particularly in drone media production applications; and c) allows powerful drone/human interaction, e.g., through automatic event detection, gesture control and person/crowd avoidance.

The use of multiple drones (drone swarms) is an important new trend in several application areas, as it enables: a) mission speedup by distributing tasks among drones (e.g., for terrain/infrastructure surveillance); b) simultaneous information acquisition (e.g., multi-view infrastructure inspection); and c) support of heterogeneous drones (e.g., sensing and communication/tethering ones). Such systems should be able to survey or inspect large areas, ranging, for example, from a stadium to an entire city, a highway or an electric power line, and/or to cover outdoor events.

The drone or drone team should have: a) increased decisional autonomy, allowing a mission time of at least one hour in an outdoor environment (possibly through drone swapping); and b) improved robustness and safety mechanisms (e.g., communication robustness/safety, embedded flight regulation compliance, enhanced crowd avoidance and emergency landing mechanisms), enabling it to carry out its mission despite errors or crew inaction and to handle emergencies. Such robustness is particularly important if the drones operate close to crowds and/or may face environmental hazards (e.g., wind). Therefore, the drone team must be contextually aware and adaptive.

Drone vision and machine learning play a very important role towards this end, covering the following topics: a) semantic world mapping; b) multiple drone and multiple target localization; c) drone visual analysis for target/obstacle/crowd/point of interest detection; and d) 2D/3D target tracking. Finally, embedded on-drone vision (e.g., tracking) and machine learning algorithms are extremely important, as they facilitate drone autonomy, e.g., in communication-denied environments.

The tutorial will offer an overview of all the above plus other related topics and will stress the related algorithmic aspects, such as: a) drone localization and world mapping, b) target detection, and c) target tracking and 3D localization. Selected issues in embedded CNN computing and fast convolution computation will be overviewed as well.

Lecturer

Prof. Ioannis Pitas (IEEE Fellow, IEEE Distinguished Lecturer, EURASIP Fellow) received the Diploma and PhD degree in Electrical Engineering, both from the Aristotle University of Thessaloniki (AUTH), Greece. Since 1994, he has been a Professor at the Department of Informatics of the same University. He served as a Visiting Professor at several Universities.

His current interests are in the areas of image/video processing, machine learning, computer vision, intelligent digital media, human-centered interfaces, affective computing, 3D imaging and biomedical imaging. He has published over 1138 papers, contributed to 50 books in his areas of interest and edited or (co-)authored another 11 books. He has also been a member of the program committee of many scientific conferences and workshops. In the past he served as Associate Editor or co-Editor of 9 international journals and General or Technical Chair of 4 international conferences. He participated in 70 R&D projects, primarily funded by the European Union, and is/was principal investigator/researcher in 42 such projects. He has 31000+ citations to his work and h-index 82+ (Google Scholar).

Prof. Pitas led the large European H2020 R&D project MULTIDRONE: https://multidrone.eu/ and is AUTH principal investigator in the H2020 R&D projects Aerial Core https://aerial-core.eu/ and AI4Media. He is chair of the Autonomous Systems initiative https://ieeeasi.signalprocessingsociety.org/.

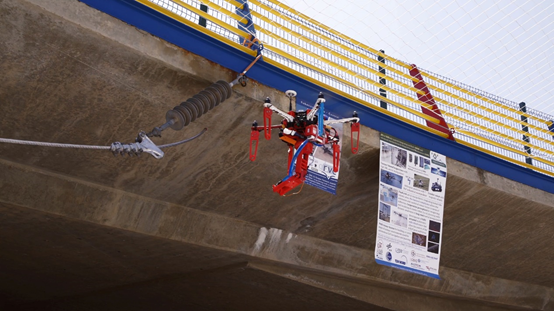

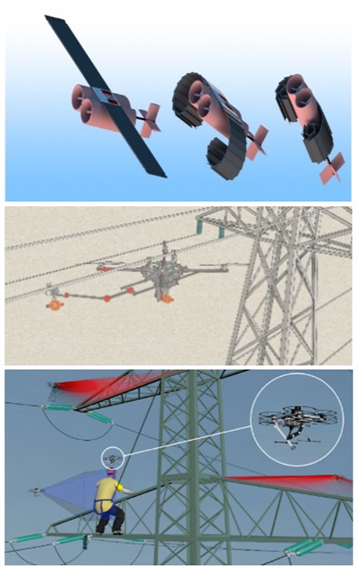

(courtesy of H2020 AERIAL-CORE R&D project)

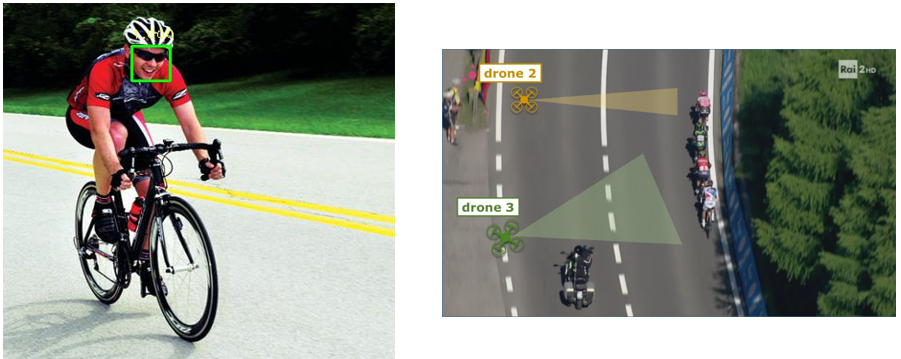

(courtesy of H2020 Multidrone R&D project)

Tutorial Outline

The tutorial will consist of 4 talks, as detailed below:

1. Introduction to drone imaging

This lecture will provide the general context for this new and emerging topic, presenting the aims of drone vision, the challenges (especially from an image/video analysis and computer vision point of view), the important issues to be tackled, the limitations imposed by drone hardware, regulations and safety considerations etc.

A multiple drone platform will also be detailed, beginning with a platform hardware overview, issues and requirements, and proceeding to safety and privacy protection issues. Finally, platform integration will be the closing topic of the lecture, elaborating on drone mission planning, object detection and tracking, target pose estimation, potential landing site detection, semantic map annotation and simulations.

2. Semantic world mapping and drone localization

Abstract: This lecture covers the essentials of how the 2D and/or 3D maps that robots/drones need are obtained, by taking measurements with appropriate sensors that allow them to perceive their environment. Semantic mapping covers how to add semantic annotations to the maps, such as POIs, roads and landing sites. 3D (possibly 6D) localization refers to location and pose estimation of multiple drones and/or multiple targets based on multiple (also multiview) sensors, specifically using Simultaneous Localization and Mapping (SLAM). Finally, drone localization fusion improves localization and mapping accuracy by exploiting the synergies between different (also multiview) sensors.
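One simple way to see why fusing localization sources can improve accuracy is inverse-variance weighting of two independent estimates of the same quantity. The sketch below is only an illustration under stated assumptions: the function name `fuse_estimates`, the sensor labels and all numeric values are hypothetical, and the errors are assumed to be unbiased and independent. It is not the fusion method presented in the lecture.

```python
def fuse_estimates(x1, var1, x2, var2):
    """Fuse two independent scalar estimates by inverse-variance weighting.

    Returns the fused estimate and its variance. Assumes unbiased,
    independent errors (an assumption made only for this illustration).
    """
    w1 = 1.0 / var1
    w2 = 1.0 / var2
    fused = (w1 * x1 + w2 * x2) / (w1 + w2)
    fused_var = 1.0 / (w1 + w2)
    return fused, fused_var


# Hypothetical altitude estimates in metres, with variances in m^2:
# a noisier GPS reading and a less noisy visual (e.g., SLAM-based) one.
gps_alt, gps_var = 52.0, 4.0
slam_alt, slam_var = 50.0, 1.0

fused_alt, fused_var = fuse_estimates(gps_alt, gps_var, slam_alt, slam_var)
print(fused_alt, fused_var)
```

Under these assumptions, the fused variance is never larger than the smaller of the two input variances, which is the accuracy gain that sensor fusion aims for.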

3. Deep learning for target detection

Abstract: Target detection using deep neural networks, Detection as search and classification task, Detection as classification and regression task, Modern architectures for target detection, RCNN, Faster RCNN, YOLO, SSD, lightweight architectures, Data augmentation, Deployment, Evaluation and benchmarking.

Machine Learning techniques can assist in detecting objects of importance and subsequently tracking them. Hence, Visual Object Detection can greatly aid the task of target detection/tracking/localization/following using drones. Drones equipped with Graphics Processing Units (GPUs) in particular can benefit from Deep Learning techniques, as GPUs routinely speed up common operations such as matrix multiplications. Recently, Convolutional Neural Networks (CNNs) have been used for the task of object detection with great results. However, using such models on drones for real-time target detection is hindered by the hardware constraints that drones impose. Various architectures and settings will be reviewed that facilitate the use of CNN-based object detectors on a drone with limited computational capabilities. Applications will be presented for a) infrastructure (electric power line) inspection and b) sports filming.
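CNN-based detectors of the kind listed above typically output many overlapping candidate boxes, which are commonly pruned by non-maximum suppression (NMS) based on intersection over union (IoU). IoU is also a standard overlap measure in detector evaluation. The following is a minimal sketch of greedy NMS in plain Python. The box format `(x1, y1, x2, y2)`, the threshold value and the example boxes are assumptions made for illustration, not taken from the lecture material.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1 = max(box_a[0], box_b[0])
    iy1 = max(box_a[1], box_b[1])
    ix2 = min(box_a[2], box_b[2])
    iy2 = min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: return indices of kept boxes, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


# Hypothetical detections: two near-duplicates and one separate box.
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.7]
kept = nms(boxes, scores)
print(kept)  # [0, 2]
```

This plain-Python version is quadratic in the number of boxes; on an embedded platform a vectorized or library implementation would normally be preferred.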

4. 2D target tracking and 3D target localization

Abstract: 2D target tracking is a crucial component of many computer vision systems. Many approaches to person/object detection and tracking in videos have been proposed. In this lecture, video tracking methods using correlation filters or convolutional neural networks are presented, focusing on video trackers that are capable of achieving real-time performance for long-term tracking on a UAV platform. Multiview target detection and tracking will be presented as well. Finally, 3D target localization, possibly using available semantic 3D world maps, will be overviewed. 3D target localization using other modalities (e.g., on-target GPS receivers) and their fusion will be investigated.
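As a rough illustration of how a 2D tracker output can be turned into a 3D target position, the sketch below back-projects a tracked pixel through a pinhole camera and intersects the viewing ray with a horizontal ground plane. The flat ground plane stands in for a 3D world map purely for simplicity. The function name `pixel_to_ground`, the intrinsics, the camera pose and the axis conventions (world z up; camera z along the optical axis) are all assumptions made for this example.

```python
def pixel_to_ground(u, v, fx, fy, cx, cy, R, cam_pos, ground_z=0.0):
    """Intersect the viewing ray of pixel (u, v) with the plane z = ground_z.

    R is a 3x3 camera-to-world rotation (nested lists) and cam_pos is the
    camera centre in world coordinates. Returns a world point (x, y, z),
    or None if the ray never reaches the plane in front of the camera.
    """
    # Ray direction in the camera frame (pinhole model, z = optical axis).
    d_cam = ((u - cx) / fx, (v - cy) / fy, 1.0)
    # Rotate the ray into the world frame: d_world = R * d_cam.
    d_world = [sum(R[i][k] * d_cam[k] for k in range(3)) for i in range(3)]
    if abs(d_world[2]) < 1e-12:
        return None  # ray is parallel to the ground plane
    t = (ground_z - cam_pos[2]) / d_world[2]
    if t <= 0:
        return None  # intersection lies behind the camera
    return tuple(cam_pos[i] + t * d_world[i] for i in range(3))


# Hypothetical nadir-looking camera 100 m above flat ground:
# camera x -> world x, camera y -> world -y, camera z -> world -z.
R_nadir = [[1.0, 0.0, 0.0],
           [0.0, -1.0, 0.0],
           [0.0, 0.0, -1.0]]
target = pixel_to_ground(740, 360, fx=1000.0, fy=1000.0, cx=640.0, cy=360.0,
                         R=R_nadir, cam_pos=(0.0, 0.0, 100.0))
print(target)  # roughly (10.0, 0.0, 0.0)
```

In practice the camera pose would come from drone localization and gimbal state, and the ground plane could be replaced by an elevation lookup in the available 3D map.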

2D convolutions are the basic tool in convolutional neural networks (CNNs) and in several computer vision tasks, notably object detection and tracking. As 2D convolutions and correlations are particularly demanding computationally, fast 2D convolution algorithms will be overviewed as well.
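One well-known family of fast 2D convolution algorithms relies on the convolution theorem: a linear convolution can be computed as a pointwise product in the frequency domain, provided both inputs are zero-padded to the full output size. The sketch below, which assumes NumPy is available, compares an FFT-based implementation against direct summation. The function names and the random test data are illustrative, and the lecture may cover other fast algorithms as well.

```python
import numpy as np


def conv2d_direct(image, kernel):
    """Full 2D linear convolution by direct summation (reference version)."""
    ih, iw = image.shape
    kh, kw = kernel.shape
    out = np.zeros((ih + kh - 1, iw + kw - 1))
    for r in range(kh):
        for c in range(kw):
            # Each kernel tap adds a shifted, scaled copy of the image.
            out[r:r + ih, c:c + iw] += kernel[r, c] * image
    return out


def conv2d_fft(image, kernel):
    """Full 2D linear convolution via the convolution theorem."""
    out_shape = (image.shape[0] + kernel.shape[0] - 1,
                 image.shape[1] + kernel.shape[1] - 1)
    # Zero-padding to the full output size makes the circular convolution
    # computed by the FFT equal to the desired linear convolution.
    spectrum = np.fft.rfft2(image, s=out_shape) * np.fft.rfft2(kernel, s=out_shape)
    return np.fft.irfft2(spectrum, s=out_shape)


rng = np.random.default_rng(0)
image = rng.standard_normal((32, 32))
kernel = rng.standard_normal((5, 5))
print(np.allclose(conv2d_fft(image, kernel), conv2d_direct(image, kernel)))
```

Correlation, as used in correlation-filter trackers, can be obtained from the same routine by flipping the kernel along both axes before convolving. The FFT route tends to pay off for large kernels, whereas small CNN kernels are often better served by other fast schemes.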

Intended audience

The target audience is researchers, engineers and computer scientists working in the areas of computer vision, machine learning, and image and video processing and analysis, who would like to enter the new and exciting field of drone visual information analysis and processing for surveillance applications.

Related Publications

- AERIAL-CORE R&D Project (AERIAL COgnitive integrated multi-task Robotic system with Extended operation range and safety) funded by the EU (2019-2), within the scope of the H2020 framework. URL: https://aerial-core.eu/

- Multidrone R&D Project (MULTIple DRONE platform for media production), funded by the EU (2017-19), within the scope of the H2020 framework. URL: https://multidrone.eu/

- Karakostas, I. Mademlis, N.Nikolaidis and I.Pitas, “Shot Type Constraints in UAV Cinematography for Autonomous Target Tracking”, Information Sciences (Elsevier), vol. 506, pp. 273-294, 2020

- Mademlis, V. Mygdalis, N.Nikolaidis, M. Montagnuolo, F. Negro, A. Messina and I.Pitas, “High-Level Multiple-UAV Cinematography Tools for Covering Outdoor Events”, IEEE Transactions on Broadcasting, vol. 65, no. 3, pp. 627-635, 2019

- Mademlis, N.Nikolaidis, A.Tefas, I.Pitas, T. Wagner and A. Messina, “Autonomous UAV Cinematography: A Tutorial and a Formalized Shot-Type Taxonomy”, ACM Computing Surveys, vol. 52, issue 5, pp. 105:1-105:33, 2019

- Papaioannidis and I.Pitas, “3D Object Pose Estimation using Multi-Objective Quaternion Learning”, IEEE Transactions on Circuits and Systems for Video Technology, 2019

- Mygdalis, A.Tefas and I.Pitas, “Exploiting Multiplex Data Relationships in Support Vector Machines”, Pattern Recognition (Elsevier), vol. 85, pp. 70-77, 2019

- Regazzoni and I.Pitas, “Perspectives in Autonomous Systems Research”, Signal Processing Magazine, vol. 36, no. 5, pp. 147-148, 2019

- Mademlis, N. Nikolaidis, A. Tefas, I. Pitas, T. Wagner and A. Messina, “Autonomous Unmanned Aerial Vehicles Filming in Dynamic Unstructured Outdoor Environments”, IEEE Signal Processing Magazine, vol. 36, no. 1, pp. 147-153, 2019

- Patrona, I. Mademlis, A.Tefas and I.Pitas, “Computational UAV Cinematography for Intelligent Shooting Based on Semantic Visual Analysis”, Proceedings of the IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 2019

- Chatzikyriakidis, C. Papaioannidis and I.Pitas, “Adversarial Face De-Identification”, Proceedings of the IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 2019

- Kakaletsis, M. Tzelepi, P. I. Kaplanoglou, C. Symeonidis, N.Nikolaidis, A.Tefas and I.Pitas, “Semantic Map Annotation Through UAV Video Analysis Using Deep Learning Models in ROS”, Proceedings of the International Conference on MultiMedia Modeling (MMM), Thessaloniki, Greece, 2019

- Karakostas, I. Mademlis, N.Nikolaidis and I.Pitas, “Shot Type Feasibility in Autonomous UAV Cinematography”, Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Brighton, UK, 2019

- Nousi, A.Tefas and I.Pitas, “Deep Convolutional Feature Histograms for Visual Object Tracking”, Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Brighton, UK, 2019

- Karakostas, V. Mygdalis, A.Tefas and I.Pitas, “On Detecting and Handling Target Occlusions in Correlation-Filter-based 2D Tracking”, Proceedings of the 27th European Signal Processing Conference (EUSIPCO), A Coruna, Spain, 2019

- Nousi, D. Triantafyllidou, A.Tefas and I.Pitas, “Joint Lightweight Object Tracking and Detection for Unmanned Vehicles”, Proceedings of the IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 2019

- Mademlis, A. Torres-Gonzalez, J. Capitan, R. Cunha, B. Guerreiro, A. Messina, F. Negro, C. Le Barz, T. Goncalves, A.Tefas, N.Nikolaidis and I.Pitas, “A Multiple-UAV Software Architecture for Autonomous Media Production”, Proceedings of the 27th European Signal Processing Conference (EUSIPCO), Satellite Workshop: Signal Processing, Computer Vision and Deep Learning for Autonomous Systems, A Coruna, Spain, 2019

- Mademlis, P. Nousi, C. Le Barz, T. Goncalves and I.Pitas, “Communications for Autonomous Unmanned Aerial Vehicle Fleets in Outdoor Cinematography Applications”, Proceedings of the 27th European Signal Processing Conference (EUSIPCO), Satellite Workshop: Signal Processing, Computer Vision and Deep Learning for Autonomous Systems, A Coruna, Spain, 2019

- Cunha, M. Malaca, V. Sampaio, B. Guerreiro, P. Nousi, I. Mademlis, A.Tefas and I.Pitas, “Gimbal Control for Vision-based Target Tracking”, Proceedings of the 27th European Signal Processing Conference (EUSIPCO), Satellite Workshop: Signal Processing, Computer Vision and Deep Learning for Autonomous Systems, A Coruna, Spain, 2019

- Nousi, I. Mademlis, I. Karakostas, A.Tefas and I.Pitas, “Embedded UAV Real-Time Visual Object Detection and Tracking”, Proceedings of the IEEE International Conference on Real-time Computing and Robotics 2019 (RCAR2019), Irkutsk, Russia, 2019

- Symeonidis, I. Mademlis, N.Nikolaidis and I.Pitas, “Improving Neural Non-Maximum Suppression for Object Detection by Exploiting Interest-Point Detectors”, Proceedings of IEEE International Workshop on Machine Learning for Signal Processing (MLSP), Pittsburgh, PA, USA, 2019

- Mygdalis, A. Iosifidis, A. Tefas, I. Pitas, “Semi-Supervised Subclass Support Vector Data Description for Image and Video Classification”, Neurocomputing (Elsevier), vol. 278, pp. 51-61, 2018

- Tsapanos, A. Tefas, N. Nikolaidis and I. Pitas, “Neurons With Paraboloid Decision Boundaries for Improved Neural Network Classification Performance”, IEEE Transactions on Neural Networks and Learning Systems (TNNLS), vol. 30, no. 1, pp. 284-294, 2018

- Mygdalis, A.Tefas and I.Pitas, “K-Anonymity-inspired Adversarial Attack and Multiple One-class Classification Defense”, Neural Networks, Elsevier, vol. 124, pp. 296-307, 2020.

- Nousi, S. Papadopoulos, A.Tefas and I.Pitas, “Deep autoencoders for attribute preserving face de-identification”, Elsevier Signal Processing: Image Communication, vol. 81, pp. 115699, 2020.

- Nousi, E. Patsiouras, A. Tefas, I. Pitas, “Convolutional Neural Networks for Visual Information Analysis with Limited Computing Resources”, Proceedings of the IEEE International Conference on Image Processing (ICIP), Athens, Greece, 2018

- Mademlis, V. Mygdalis, N. Nikolaidis, I. Pitas, “Challenges in Autonomous UAV Cinematography: An Overview”, Proceedings of the IEEE International Conference on Multimedia and Expo (ICME), San Diego, USA, 2018

- Mygdalis, A. Tefas, I. Pitas, “Learning Multi-Graph Regularization for SVM Classification”, Proceedings of the IEEE International Conference on Image Processing (ICIP), Athens, Greece, 2018

- Karakostas, I. Mademlis, N. Nikolaidis, I. Pitas, “UAV Cinematography Constraints Imposed by Visual Target Trackers”, Proceedings of the IEEE International Conference on Image Processing (ICIP), Athens, Greece, 2018

- Passalis, A. Tefas, I. Pitas, “Efficient Camera Control Using 2D Visual Information for Unmanned Aerial Vehicle-based Cinematography”, Proceedings of the International Symposium on Circuits and Systems (ISCAS), Florence, Italy, 2018

- Chriskos, R. Zhelev, V. Mygdalis, I. Pitas, “Quality Preserving Face De-Identification Against Deep CNNs”, Proceedings of the IEEE International Workshop on Machine Learning for Signal Processing (MLSP), Aalborg, Denmark, September 2018

- Chriskos, O. Zoidi, A. Tefas and I. Pitas, “De-identifying Facial Images Using Singular Value Decomposition and Projections”, Multimedia Tools and Applications (Springer), vol. 76, no. 3, pp. 3435-3468, 2017

- Chriskos, J. Munro, V. Mygdalis, I. Pitas, “Face Detection Hindering”, Proceedings of the IEEE Global Conference on Signal and Information Processing (GlobalSIP), Montreal, Canada, 2017

- O. Zachariadis, V. Mygdalis, I. Mademlis, I. Pitas, “2D Visual Tracking for Sports UAV Cinematography Applications”, Proceedings of the IEEE Global Conference on Signal and Information Processing (GlobalSIP), Montreal, Canada, 2017

Material to be distributed to attendees

The presentation slides (in PDF) can be downloaded by the tutorial registrants.

Sponsor

The tutorial is sponsored by the Horizon2020 AERIAL-CORE R&D Project.

Relevant links

Prof. I. Pitas Google scholar link: https://scholar.google.gr/citations?user=lWmGADwAAAAJ&hl=el

AERIAL-CORE R&D Project: https://aerial-core.eu/

Multidrone R&D project: https://multidrone.eu/

AIIA Lab, Aristotle University of Thessaloniki: http://www.aiia.csd.auth.gr/EN/