Applications focus on autonomous/self-driving cars, marine vehicles and drones

DESCRIPTION

This two-day short course provides an overview and in-depth presentation of the various computer vision and deep learning problems encountered in autonomous systems perception, e.g. in drone imaging or autonomous car vision. It consists of two parts (A, B) and each of them includes up to 8 one-hour lectures.

Part A lectures (6-8 hours) provide an in-depth introduction to autonomous systems imaging and the relevant architectures, as well as a solid background on the necessary topics of computer vision (image acquisition, camera geometry, stereo and multiview imaging, mapping and localization) and machine learning (introduction to neural networks, perceptron, backpropagation, deep neural networks, convolutional NNs).

Part B lectures (6-8 hours) provide in-depth views of the various topics encountered in autonomous systems perception, ranging from vehicle localization and mapping to target detection and tracking, autonomous systems communications and embedded CPU/GPU computing. Part B also contains application-oriented lectures on autonomous drones, cars and marine vessels (e.g. for land/marine surveillance, search and rescue missions, infrastructure/building inspection and modeling, cinematography).

WHEN?

The course will take place on 6-7 October 2021.

WHERE?

The course will take place online.

PROGRAM

| Time*/date | 6/10/2021 | 7/10/2021 |
|---|---|---|
| 08:00 – 09:00 | Registration | Registration |
| 09:00 – 10:00 | Introduction to autonomous systems imaging | Simultaneous Localization and Mapping |
| 10:00 – 11:00 | Digital Image and Video | Neural SLAM |
| 11:00 – 11:30 | Coffee break | Coffee break |
| 11:30 – 12:30 | Camera geometry | Deep Object Detection |
| 12:30 – 13:30 | Stereo and Multiview imaging | 2D Visual Object Tracking |
| 13:30 – 14:30 | Lunch break | Lunch break |
| 14:30 – 15:30 | Introduction to Artificial Neural Networks. Perceptron | Drone mission planning and control |
| 15:30 – 16:30 | Multilayer perceptron. Backpropagation | Introduction to car vision |
| 16:30 – 17:00 | Coffee break | Coffee break |
| 17:00 – 18:00 | Deep neural networks. Convolutional NNs | Introduction to autonomous marine vehicles |
| 18:00 – 19:00 | Introduction to multiple drone imaging | CVML Software development tools |

*Eastern European Summer Time (EEST)

**This programme is indicative. Changes (hopefully small) in lectures or lecturers may be announced without prior notice.

***Each topic will include a 45-minute lecture and a 15-minute break.

REGISTRATION

——————————————————————————————————————————————————–

Early registration (until 2/08/2021):

• Standard: 300 Euros

• Unemployed or Undergraduate/MSc/PhD student*: 200 Euros

Later or on-site registration (after 2/08/2021):

• Standard: 350 Euros

• Unemployed or Undergraduate/MSc/PhD student*: 250 Euros

*Proof of unemployment or student status should be provided upon registration.

After the completion of your payment, please fill in the form below:

_______________________________________________________________

Lectures will be in English. PDF slides will be available to course attendees.

A certificate of attendance will be provided.

***Due to the special COVID-19 circumstances, the 2021 edition of the «Short course on Deep Learning and Computer Vision for Autonomous Systems» will take place as a live web course on 6-7 October 2021 (default mode). Lectures will be prerecorded to assist attendees who experience problems due to time differences. Remote participation will be available via teleconferencing.***

Cancelation policy:

- 50% refund for cancelation up to 15/07/2021

- 0% refund afterwards

Every effort will be made to run the course as planned. However, due to the special COVID-19 circumstances, the organizer (AUTH) reserves the right to cancel the event at any time by simple notice to the registrants (by email or by announcing it on the course web page). In this case, each registrant will be reimbursed 100% of the registration fee. However, the organizer will not be held liable for any other loss incurred by the registrants (e.g., for air tickets, hotels or any other travel arrangements).

LECTURERS

Prof. Ioannis Pitas (IEEE Fellow, IEEE Distinguished Lecturer, EURASIP Fellow) received the Diploma and Ph.D. degree in Electrical Engineering, both from the Aristotle University of Thessaloniki, Greece. Since 1994, he has been a Professor at the Department of Informatics of the same University. His current interests are in the areas of image/video processing, machine learning, computer vision, intelligent digital media, human-centered interfaces, affective computing, 3D imaging, and biomedical imaging. He has published over 920 papers, contributed to 45 books in his areas of interest and edited or (co-)authored another 11 books. He has also been a member of the program committee of many scientific conferences and workshops. In the past he served as Associate Editor or co-Editor of 9 international journals and General or Technical Chair of 4 international conferences. He has participated in 71 R&D projects, primarily funded by the European Union, and is or was principal investigator/researcher in 41 such projects. He leads the International AI Doctoral Academy (AIDA) and is PI in the Horizon 2020 EU-funded R&D projects AI4Media (one of the 4 AI flagship projects in Europe) and AerialCore. His work has received 33300+ citations and he has an h-index of 86+.

Prof. Pitas led the large European H2020 R&D project MULTIDRONE and is principal investigator (AUTH) in the H2020 projects AerialCore and AI4Media. He is chair of the Autonomous Systems initiative https://ieeeasi.signalprocessingsociety.org/.

Professor Pitas will deliver 16 lectures on deep learning and computer vision.

Educational record of Prof. I. Pitas: He was Visiting/Adjunct/Honorary Professor/Researcher and lectured at several universities: University of Toronto (Canada), University of British Columbia (Canada), EPFL (Switzerland), Chinese Academy of Sciences (China), University of Bristol (UK), Tampere University of Technology (Finland), Yonsei University (Korea), Erlangen-Nurnberg University (Germany), National University of Malaysia, Henan University (China). He has delivered 90 invited/keynote lectures at prestigious international conferences and top universities worldwide. He has run 17 short courses and tutorials on Autonomous Systems, Computer Vision and Machine Learning, most of them in the past 3 years, in many countries, e.g., USA, UK, Italy, Finland, Greece, Australia, New Zealand, Korea, Taiwan, Sri Lanka, Bhutan.

http://www.aiia.csd.auth.gr/LAB_PEOPLE/IPitas.php

https://scholar.google.gr/citations?user=lWmGADwAAAAJ&hl=el

TOPICS

6/10/2021 – Part A (first day, 8 lectures):

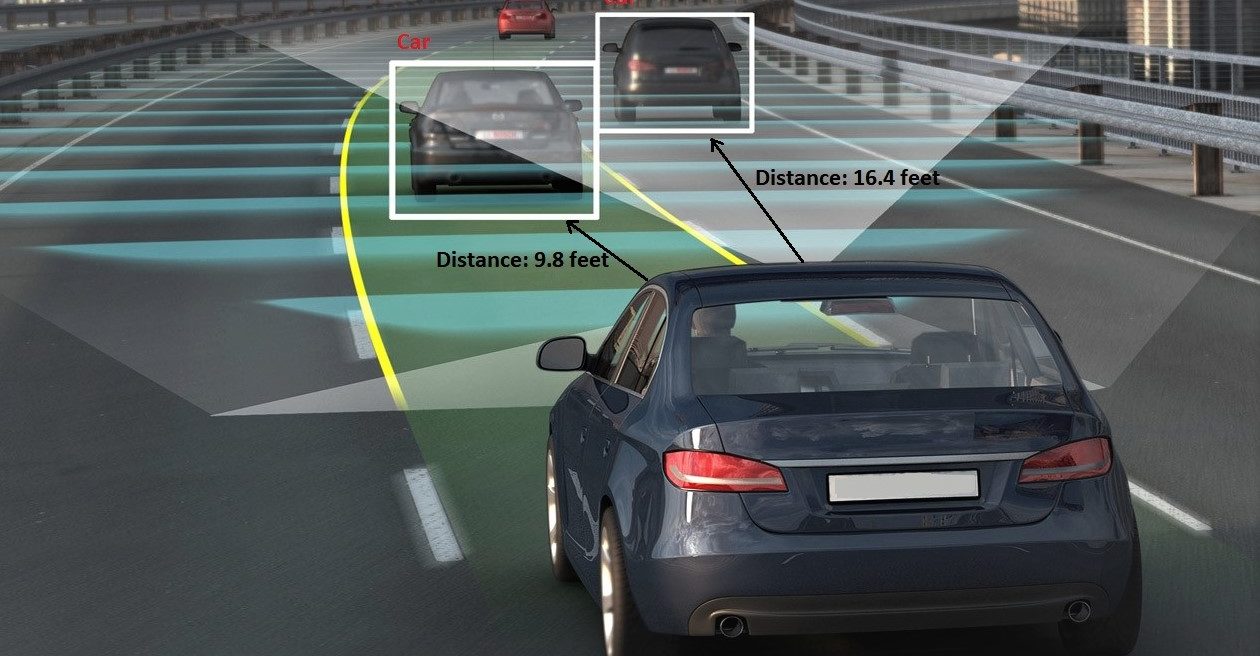

1. Introduction to autonomous systems imaging

Abstract: This lecture will provide an introduction and the general context for this new and emerging topic, presenting the aims of autonomous systems imaging and the many issues to be tackled, especially from an image/video analysis point of view, as well as the limitations imposed by the system’s hardware. Applications in autonomous cars, drones and marine vessels will be reviewed.

Lecture material: Download

2. Digital Image and Video

3. Camera geometry

4. Stereo and Multiview imaging

5. Introduction to Artificial Neural Networks. Perceptron

6. Multilayer perceptron. Backpropagation

7. Deep neural networks. Convolutional NNs

Abstract: From multilayer perceptrons to deep architectures. Fully connected layers. Convolutional layers. Tensors and mathematical formulations. Pooling. Training convolutional NNs. Initialization. Data augmentation. Batch Normalization. Dropout. Deployment on embedded systems. Lightweight deep learning.

Sample Lecture material: Download

8. Introduction to multiple drone imaging

Abstract: This lecture will provide the general context for this new and emerging topic, presenting the aims of drone vision, the challenges (especially from an image/video analysis and computer vision point of view), the important issues to be tackled, and the limitations imposed by drone hardware, regulations and safety considerations. An overview of the use of multiple drones in media production will be given. The three use scenarios, the challenges to be faced and the adopted methodology will be discussed in the first part of the lecture, followed by scenario-specific, media production and system platform requirements. The multiple drone platform will be detailed in the second part of the lecture, beginning with an overview of the platform hardware, issues and requirements and proceeding to safety and privacy protection issues. Finally, platform integration will be the closing topic of the lecture, elaborating on drone mission planning, object detection and tracking, UAV-based cinematography, target pose estimation, privacy protection, ethical and regulatory issues, potential landing site detection, crowd detection, semantic map annotation and simulations.

7/10/2021 – Part B (second day, 8 lectures):

1. Simultaneous Localization and Mapping

2. Neural SLAM

3. Deep Object Detection

4. 2D Visual Object Tracking

5. Drone mission planning and control

Abstract: In this lecture, the audiovisual shooting mission is first formally defined. The introduced audiovisual shooting definitions are encoded in mission planning commands, i.e., a navigation and shooting action vocabulary, and their corresponding parameters. The drone mission commands, as well as the hardware/software architecture required for manual/autonomous mission execution, are described. The software infrastructure includes the planning modules, which assign, monitor and schedule different behaviours/tasks to the drone swarm team according to director and environmental requirements, and the control modules, which execute the planned mission by translating high-level commands into desired drone+camera configurations, producing commands for the autopilot, camera and gimbal of the drone swarm.

6. Introduction to car vision

Abstract: In this lecture, an overview of autonomous car technologies will be presented (structure, HW/SW, perception), focusing on car vision. An example autonomous vehicle will be presented as a special case, including its sensors and algorithms. Then, an overview of computer vision applications in this autonomous vehicle will be presented, such as visual odometry, lane detection and road segmentation. The current progress of autonomous driving will also be introduced.

7. Introduction to autonomous marine vehicles

Abstract: Autonomous marine vehicles can be divided into surface vehicles (boats, ships) and underwater ones (submarines). They have many applications in marine transportation and marine/submarine surveillance, and pose many challenges in environment perception/mapping and vehicle control, which will be reviewed in this lecture.

8. CVML Software development tools

AUDIENCE

Any practicing engineer or scientist, or any student, with some knowledge of computer vision and/or machine learning, notably CS, CSE, ECE and EE students, graduates or industry professionals with a relevant background.

IF I HAVE A QUESTION?

PAST COURSE EDITIONS

——————————————————————————————————————

2020

Participants: 21

Countries: Belgium, Ireland, Greece, Finland, China, Italy, France, Croatia, Spain, United Kingdom

Registrants' comments:

- “Very interesting lecture topics regarding the autonomous systems perception.”

- “The overall course was quite interesting and fulfilling in terms of the context promised.”

- “The lectures were very appealing and satisfactorily delivered.”

——————————————————————————————————————

Participants: 39

Countries: United Kingdom, Scotland, Germany, Italy, Norway, Slovakia, Spain, Croatia, Czech Republic, Greece

Registrants' comments:

- “Very adequate information about the topics of DL, CV and autonomous systems.”

- “Very good coverage of autonomous systems vision perception.”

- “Course’s content was greatly explanatory with many application examples.”

- “Very well structured course, knowledgeable lecturers.”

——————————————————————————————————————

SPONSORS

Sponsored by:

AerialCore, https://aerial-core.eu/

If you would like to sponsor the course, send us an email at: koroniioanna@csd.auth.gr

SAMPLE COURSE MATERIAL & RELATED LITERATURE

1.) C. Regazzoni, I. Pitas, ‘Perspectives in Autonomous Systems research’, Signal Processing Magazine, September 2019

2.) Artificial neural networks

3.) 3D Shape Reconstruction from 2D Images

4.) Overview of self-driving car technologies

5.) I. Pitas, ‘3D imaging science and technologies’, Amazon CreateSpace preprint, 2019

6.) R. Fan, U. Ozgunalp, B. Hosking, M. Liu, I. Pitas, “Pothole Detection Based on Disparity Transformation and Road Surface Modeling”, IEEE Transactions on Image Processing (accepted for publication 2019).

7.) Rui Fan, Xiao Ai, Naim Dahnoun, “Road Surface 3D Reconstruction Based on Dense Subpixel Disparity Map Estimation”, IEEE Transactions on Image Processing, vol. 27, no. 6, June 2018

8.) Umar Ozgunalp, Rui Fan, Xiao Ai, Naim Dahnoun, “Multiple Lane Detection Algorithm Based on Novel Dense Vanishing Point Estimation”, IEEE Transactions on Intelligent Transportation Systems, vol. 18, no. 3, March 2017

9.) Rui Fan, Jianhao Jiao, Jie Pan, Huaiyang Huang, Shaojie Shen, Ming Liu, “Real-Time Dense Stereo Embedded in A UAV for Road Inspection”, Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, 2019

10.) Semi-Supervised Subclass Support Vector Data Description for image and video classification, V. Mygdalis, A. Iosifidis, A. Tefas, I. Pitas, Neurocomputing, 2017

11.) Neurons With Paraboloid Decision Boundaries for Improved Neural Network Classification Performance, N. Tsapanos, A. Tefas, N. Nikolaidis and I. Pitas IEEE Transactions on Neural Networks and Learning Systems (TNNLS), 14 June 2018, pp 1-11

12.) Convolutional Neural Networks for Visual Information Analysis with Limited Computing Resources, P. Nousi, E. Patsiouras, A. Tefas, I. Pitas, 2018 IEEE International Conference on Image Processing (ICIP), Athens, Greece, October 7-10, 2018

13.) Learning Multi-graph regularization for SVM classification, V. Mygdalis, A. Tefas, I. Pitas, 2018 IEEE International Conference on Image Processing (ICIP), Athens, Greece, October 7-10, 2018

14.) Quality Preserving Face De-Identification Against Deep CNNs, P. Chriskos, R. Zhelev, V. Mygdalis, I. Pitas, 2018 IEEE International Workshop on Machine Learning for Signal Processing (MLSP), Aalborg, Denmark, September 2018

15.) P. Chriskos, O. Zoidi, A. Tefas, and I. Pitas, “De-identifying facial images using singular value decomposition and projections”, Multimedia Tools and Applications, 2016

16.) Deep Convolutional Feature Histograms for Visual Object Tracking, P. Nousi, A. Tefas, I. Pitas, IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2019

17.) Exploiting multiplex data relationships in Support Vector Machines, V. Mygdalis, A. Tefas, I. Pitas, Pattern Recognition 85, pp. 70-77, 2019

USEFUL LINKS

• Prof. Ioannis Pitas: https://scholar.google.gr/citations?user=lWmGADwAAAAJ&hl=el

• Department of Computer Science, Aristotle University of Thessaloniki (AUTH): https://www.csd.auth.gr/en/

• Laboratory of Artificial Intelligence and Information Analysis: http://www.aiia.csd.auth.gr/

• Thessaloniki: https://wikitravel.org/en/Thessaloniki